Datasets

Using the Datasets screen, users are now able to manage daily RDFs for any Dataset in the system. On top of that, the Datasets provides to ability to associate a Metadata Defenition with a Dataset enabling tagging of services.

CAUTION: Deleting a Daily RDF or entire Dataset is an irreversible operation.

Deleting days in a Dataset

In order to remove certain days (or: Daily RDFs) from a Dataset, browse to the Data Pipelines > Datasets menu and select the Dataset from which you want to delete certain days.

Then click the red trash bin icon next to each day for which you want to remove its data:

-6cc92b26eb137abaff8c534d620ab809.png)

Once each day has been set to delete, confirm your selection by clicking the Update button:

-013f89fd5af6dc03b120699ff95dd24f.png)

Deleting an entire Dataset

In order to delete the entire Dataset the Delete button in the lift bottom corner can be used:

-4220773d39e6c9a47b7243d25a8cc980.png)

After confirmation the entire Dataset will be deleted from disk.

Associating Metadata to a Dataset

To use Metadata for Services, a Metadata Defenition needs to be associated with the corresponding Dataset. This can be achieved by opening the Data Pipelines > Datasets management screen, then selecting the Dataset for which you want to configure Metadata:

-6e3cbee5d8b7b10194fbdb390953484f.png)

Afterwards, click the Update button to ensure your changes are saved. It is possible to configure Metadata values for all the Services in this Dataset.

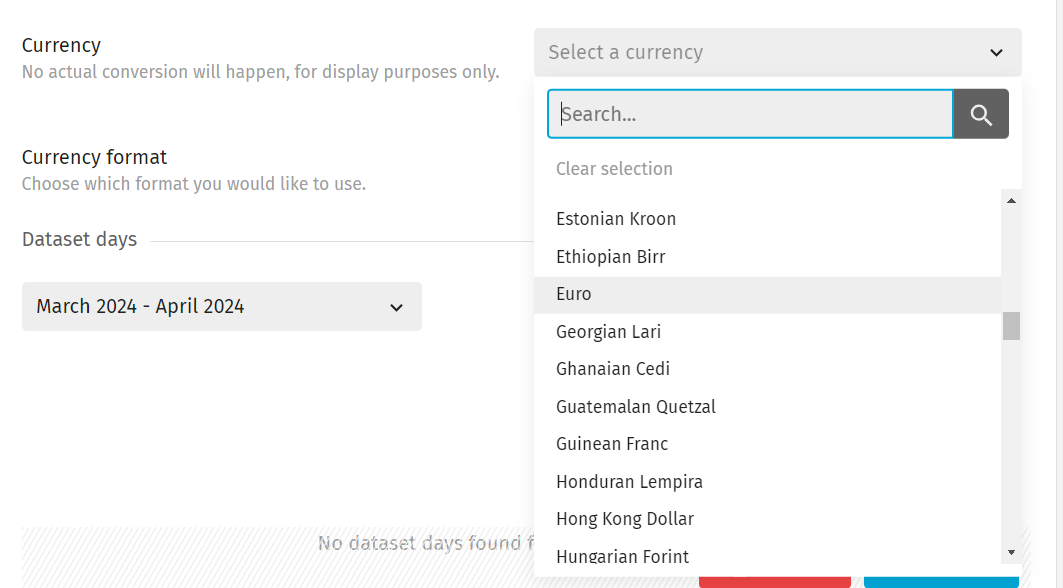

It is also possible to configure a currency for the dataset. No actual conversion will happen, but this feature allows reports to display the configured currency settings for its associated dataset:

NOTE: The currency configuration is a Beta feature. Make sure to enable Beta features in the Settings in order to use it.

Dataset lifecycle

CSV files

CSV files are a way of storing data in a table format. A table consists of one or more rows, each row containing one or more columns. There is no formal specification for CSV files although a proposed standard can be found at https://www.ietf.org/rfc/rfc4180.txt.

Although the 'C' in 'CSV' stands for the word comma, other characters may be used as the separator. TAB and semicolons are common alternatives. Exivity can import CSVs using any separator character apart from a dot or an ASCII NUL and uses the comma by default.

Datasets

A Dataset is a CSV file, usually produced by USE, which can be imported for processing by a Transcript task. To qualify as a dataset a CSV file must meet the following requirements:

- The first line in the file must define the column names

- Every row in the file must contain the same number of fields

- A field with no value is represented as a single separator character

- The separator character must not be a dot (

.) - There may be no ASCII NUL characters in the file (a NUL character has an ASCII value of 0)

When a Dataset is imported by a Transcript task any dots (.) contained in the column name will be replaced with underscores (_). This is due to a dot being a reserved character used by Fully Qualified Column Names

When a DSET is exported during the execution of a Transcript task, the exported CSV file will always be a Dataset, in that it can be imported by another Transcript task.

Although datasets are generated by USE as part of the extraction phase, additional information may be provided in CSV format to enrich the extracted data. This additional CSV data must conform to the requirements above.

DSETs

A DSET is the data in a Dataset once it has been imported by a Transcript task. A DSET resides in RAM during the Transform phase and, if referenced by a finish statement, is then stored in a database file for long-term use.

A Transcript task may import more than one dataset during execution, in which case multiple DSETs will be resident in RAM at the same time. It is, therefore, necessary for any subsequent Transcript statement which manipulates the data in a DSET to identify which DSET (or in some cases DSETs) to perform the operation on. This is achieved using a DSET ID which is the combination of a Source tag and an Alias tag.

If multiple DSETs are present in memory, the first one that was created will be the default DSET. Column names that are not fully qualified are assumed to be located in the default DSET.

Source/Alias tags and DSET IDs

After a Dataset has been imported the resulting DSET is assigned a unique identifier such that it can be identified by subsequent statements. This unique identifier is termed the DSET ID and consists of two components, the Source and Alias tags.

The default alias tag is defined automatically and is the filename of the imported Dataset, minus the file extension. For example, a Dataset called usage.csv will have an alias of usage.

The Transcript import statement can take one of two forms. Depending on which is used, the Source tag is determined automatically, or specified manually as follows:

Automatic source tagging

By convention Datasets produced by USE are located in sub-directories within the directory <basedir>\collected. The sub-directories are named according to the data source and datadate associated with that data. The naming convention is as follows:

<data_source>\<yyyy>\<MM>\<dd>_filename.csv

where:

<data_source>is a descriptive name for the external source from which the data was collected<yyyy>is the year of the datadate as a 4-digit number<MM>is the month of the datadate as a 2 digit number<dd>is the day of the month of the datadate as a 2 digit number

When importing one of these datasets using automatic source tagging, the source tag will be the name of the directory containing that dataset. Thus, assuming a datadate of 20160801 the following statement:

import usage from Azure

will import the Dataset <basedir>\collected\Azure\2016\08\01_usage.csv, and assign it a Source tag of Azure.

Manual source tagging

When importing a Dataset from a specified path, the Source tag is specified as part of the import statement. For example the statement:

import my_custom_data\mycosts.csv source costs

will import the Dataset <basedir>\my_custom_data\mycosts.csv and assign it a Source tag of costs. Checks are done during the import process to ensure that every imported Dataset has a unique source.alias combination.

Fully Qualified Column names

When a column name is prefixed with a DSET ID in the manner described previously, it is said to be fully qualified. For example the fully qualified column name Azure.usage.MeterName explicitly refers to the column called MeterName in the DSET Azure.usage.